A Taste of Rust

By Evan Miller

May 14, 2015

Update: Steve Klabnik has responded to some of the issues I brought up here. It looks like I did not fully appreciate Rust’s optimization levels, among other things. When you’re done with this, go read his comments!

The word rust is the last thing I want to think about when I am hungry and/or fantasizing about building something that will endure through the ages. As a name for a programming language, “Rust” doesn’t exactly inspire confidence, but to take your mind off of ancient AMC Pacers and eating a bucket full of rusty nails, I’ll let you in on a couple of folk etymologies for the language, only one of which I just made up.

The first etymology is an apocryphal IRC chat log from the language’s creator. It is not made-up, at least not by me.

<graydon> I think I named it after fungi. rusts are amazing creatures.

<graydon> Five-lifecycle-phase heteroecious parasites. I mean, that’s just _crazy_.

<graydon> talk about over-engineered for survival

<jonanin> what does that mean? :]

Isn’t a death-resistant parasitic fungus so much better to contemplate than tiny bits of corroded metal lacerating the insides of your mouth?

The second etymology is my own interpretation, but I think it works well — so well, in fact, that after reading the next three paragraphs you’ll probably forget all about ineradicable fungal diseases, as well as the tetanus-infused blood dribbling down your chin.

If you’ve ever seen a dark-brown skyscraper, it was probably made from a special steel called Corrosive Tensile Steel. Corten steel (sometimes spelled, with all the guile of an early programming language, COR-TEN) is deliberately designed to oxidize; the resulting rust forms a protective, weather-proof coating on the steel, so from an engineering perspective, the building never needs to be painted (or eaten).

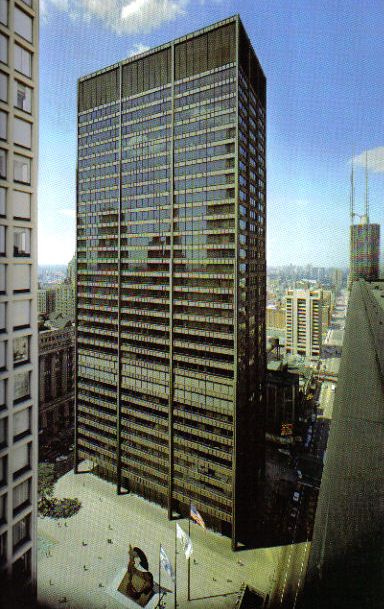

Corten is used in a very famous Picasso sculpture, as well as in the Barclays Center in Brooklyn, the U.S. Steel building in Pittsburgh, and the Daley Center Building in Chicago. Buildings clad in Corten start out a neutral gray color, then turn bright orange after a couple of rains (usually discoloring nearby gutters and sidewalks in the process), and after a year or so, settle into a dark brown.

A Picasso sculpture made of Corten, in two stages of oxidation.

The building behind the sculpture is clad in the same material.

I like to think of Rust, the programming language, as a similarly earth-toned protective layer, but over a piece of software instead of a piece of steel. The Rust language won’t win any beauty contests, but it is impervious to the various elements that may ravage a multi-threaded software program. Initially you might complain about Rust’s requirements and appearance, but in time it will feel normal, and eventually you’ll start having smug thoughts about the reduced costs of long-term maintenance. So there you have it — Rust, the Corten steel of modern programming languages.

I admit, I only started learning Rust a few weeks ago. But I had so much fun learning Go last month that I decided to write another programming language review. Rust 1.0 (final) is scheduled to be released tomorrow; what follows are my impressions of the Rust 1.0 nightly builds from reading docs and blog posts and writing a small library in Rust. YMMV, but hopefully you’ll enjoy the ride… in my AMC Pacer made out of Corten.

Iron and oxygen

Rust is a systems language. I’m not sure what that term means, but it seems to imply some combination of native code compilation, not being Fortran, and making no mention of category theory in the documentation. For what it’s worth, there’s a graduate student writing an operating system kernel in Rust, so whatever your definition of a systems language, Rust probably meets it.

The Wikipedia page on Rust lists sixteen “influences,” or sources of linguistic inspiration, half of which I had heard of before. Rust’s influences, in alphabetical order, are: Alef, C#, C++, Cyclone, Erlang, Haskell, Hermes, Limbo, Newsqueak, NIL, OCaml, Python, Ruby, Scheme, Standard ML, and Swift.

I like the idea of a polyglot programming language that takes ideas from many places, the more obscure the better. (I especially appreciate the nihilistic audacity of a language named NIL.) I’m not sure if the Wikipedia list is by any means complete or accurate, but I could detect features lifted from at least a few on the list. In practice, I found that while Rust’s functional influences are an ergonomic improvement over traditional systems languages, the gluttony of influences has created some tension in the language design between competing paradigms.

Here’s a quick example that may leave you scratching your head. Rust’s if statement is also an expression, so you can do something like this:

let x = if something > 0 { 2 } else { 4 };

Pretty great, right? But then, this won’t compile:

let x = if something > 0 { 2 };

The reason is that the phantom else clause evaluates to an empty tuple (), which is type-incompatible with 2. (Rust is strongly, statically typed with type inference.) Fair enough. Rather counterintuitively, the following line will compile:

let x = if something > 0 { () };

The if clause and the phantom else clause have the same type (), so the above line generates no qualms from the compiler. While I understand the appeal of an if expression, juggling functional and imperative programming styles in a strongly typed universe creates some oddities such as the above.
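To make the three cases concrete, here is a minimal sketch that wraps them in two helper functions. The names `pick` and `pick_unit` are mine, invented for illustration; the type annotation on `x` is only there to make the unit type visible.

```rust
// `if` is an expression: both branches must produce the same type.
fn pick(something: i32) -> i32 {
    if something > 0 { 2 } else { 4 }
}

// With no `else`, the missing branch has type `()`, so the `if` body
// must also evaluate to `()` for the whole expression to type-check.
fn pick_unit(something: i32) {
    let x: () = if something > 0 { () };
    x
}
```

Swapping the `()` in `pick_unit` for a `2` reproduces the compile error described above.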

As another example of the clash of paradigms, although Rust supports systems-and-arrays programming, it has renounced C-style for loops (initialize, test, increment) in favor of The Iterator; the documentation goes even further, renouncing indexed accesses altogether in favor of index-less Iteration. Iterators are a great and reusable way to encapsulate your blah-blah-blah-blah-blah, but of course they’re impossible to optimize. The value of .next() remains a kind of holy mystery shrouded behind a non-pure function call, so anytime you have an iterator-style loop, the compiler is going to be left twiddling its thumbs.

As a simple demonstration, here’s an idiomatic Rust program that uses an iterator:

fn main() {

let mut sum = 0.0;

for i in 0..10000000 {

sum += i as f64;

}

println!("Sum: {}", sum);

}

It runs about five times slower than the equivalent program that uses a while loop:

fn main() {

let mut sum = 0.0;

let mut i = 0;

while i < 10000000 {

sum += i as f64;

i += 1;

}

println!("Sum: {}", sum);

}

The emphasis on iterators causes me extra sadness as Rust has contiguous-memory, natively-typed arrays, which would otherwise be perfect candidates for optimization, and Rust uses LLVM on the backend, which can optimize loops eight ways to next Sunday; but all that is thrown out the window in favor of The Noble Iterator. On the other hand, there’s a SIMD module in the standard library, so maybe they care about CPU design after all? I’m not sure. Rust feels like it’s being pulled between the demands of efficiency (exemplified by its native-everything and choice of LLVM) and the modern definition of beautiful code (which I take to be, “lots of chained function calls”).
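For what it's worth, the two loops can be put side by side as functions. As the update at the top of this post suggests, timings depend heavily on the optimization level, so this sketch makes no speed claim and only checks that both styles compute the same value. The function names are hypothetical.

```rust
// Iterator style: map each integer to f64 and let `sum` fold them.
fn sum_iter(n: u32) -> f64 {
    (0..n).map(|i| i as f64).sum()
}

// Explicit while loop, mirroring the second program above.
fn sum_while(n: u32) -> f64 {
    let mut sum = 0.0;
    let mut i = 0;
    while i < n {
        sum += i as f64;
        i += 1;
    }
    sum
}
```

Timing the two under both a debug and an optimized build would be the fair way to compare them.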

One final example of Rust’s unresolved identity before I move on to more interesting things. In addition to iterators, Rust has lazy evaluation of lists, so you can do things like create an infinite list, as in the following:

let y = (1..);

…then chain together “iterator adaptors,” like this example from the documentation:

for i in (1..).step_by(5).take(5) {

println!("{}", i);

}

I’ve personally never needed a list larger than the size of the known universe, but to each his own. Sipping from the chalice of infinity may feel like you’re dining beneath the golden shields of Valhalla, but if you’re coming from not-a-Haskell, you might be surprised at what code actually gets executed, and when. But then Haskell programmers won’t necessarily feel at home, either; Rust does not have tail-call optimization, or any facilities for marking functions as pure, so the compiler can’t do the sort of functional optimization that Haskell programmers have come to expect out of Scotland.
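A small sketch of what actually gets executed, and when. It assumes a current Rust toolchain, where `step_by` is a stable iterator method; the function names and the `calls` counter are mine, added only to make the laziness observable.

```rust
// Nothing is computed until `collect` pulls values through the chain.
fn first_steps() -> Vec<u32> {
    (1u32..).step_by(5).take(5).collect()
}

// Count how many times the closure runs over an "infinite" range.
fn count_evaluations() -> usize {
    let mut calls = 0;
    let _squares: Vec<u64> = (1u64..)
        .map(|i| {
            calls += 1; // side effect, so we can see each evaluation
            i * i
        })
        .take(3)
        .collect();
    calls
}
```

Only as many elements are evaluated as `take` asks for, which is why the infinite range terminates.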

The Rust language creators apparently felt the multi-paradigm tension, too, and have ripped out a number of previously-announced features during the design and development of Rust; I’ll discuss some ex-features (including green threads and garbage collection) later on. But for now I want to go over the fun stuff.

Rust: The fun stuff

Most tutorials on Rust start out by discussing Rust’s concepts of memory safety, pointer ownership, borrowed references, and the like. They’re important concepts in the language, but their immediate introduction is a bit like showing up at a party and being told in haste which bathrooms are off-limits. (Spoiler: most of them.) I generally like to take my coat off first.

So for the moment I’m going to skip all the stuff about memory safety and references and threads and tell you some of my favorite (other) parts about the language, in no particular order.

Native types in Rust are intelligently named: i32, u32, f32, f64, and so on. These are, indisputably, the correct names for native types.

Destructuring assignment: awesome. All languages which don’t have it should adopt it. Simple example:

let ((a, b), c) = ((1, 2), 3);

// a, b, and c are now bound

let (d, (e, f)) = ((4, 5), 6);

// compile-time error: mismatched types

More complex example:

// What is a rectangle?

struct Rect { origin: (u32, u32), size: (u32, u32) }

// Create an instance

let r1 = Rect { origin: (1, 2), size: (6, 5) };

// Now use destructuring assignment

let Rect { origin: (x, y), size: (width, height) } = r1;

// x, y, width, and height are now bound
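The same destructuring also works inside a function body. The sketch below reuses the struct from the example; `area_and_corner` is a made-up helper that exists only to give the bound names something to do.

```rust
struct Rect {
    origin: (u32, u32),
    size: (u32, u32),
}

// Destructure the struct and its nested tuples in a single `let`.
fn area_and_corner(r: Rect) -> (u32, (u32, u32)) {
    let Rect { origin: (x, y), size: (width, height) } = r;
    // Area, plus the corner diagonally opposite the origin.
    (width * height, (x + width, y + height))
}
```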

The match keyword: also awesome, basically a switch with destructuring assignment.

Like if, match is an expression, not a statement, so it can be used as an rvalue. But unlike if, match doesn’t suffer from the phantom-else problem. The compiler uses type-checking to guarantee that the match will match something — or speaking more precisely, the compiler will complain and error out if a no-match situation is possible. (You can always use a bare underscore _ as a catchall expression.)

The match keyword is an excellent language feature, but there are a couple of shortcomings that prevent my full enjoyment of it. The first is that the only way to express equality in the destructured assignments is to use guards. That is, you can’t do this:

match (1, 2, 3) {

(a, a, a) => "equal!",

_ => "not equal!",

}

Instead, you have to do this:

match (1, 2, 3) {

(a, b, c) if a == b && b == c => "equal!",

_ => "not equal!",

}

Erlang allows the former pattern, which makes for much more succinct code than requiring separate assignments for things that end up being the same anyway. It would be handy if Rust offered a similar facility.
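For reference, here is the guard version as a complete function that can be called with different tuples. The name `all_equal` is hypothetical.

```rust
// Equality between bound names has to be spelled out in a guard.
fn all_equal(t: (i32, i32, i32)) -> &'static str {
    match t {
        (a, b, c) if a == b && b == c => "equal!",
        _ => "not equal!",
    }
}
```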

My second qualm with Mr. Match Keyword is the way the compiler determines completeness. I said before “the compiler will complain and error out if a no-match situation is possible,” but it would be better if that statement read if and only if. Rust uses something called Algebraic Data Types to analyze the match patterns, which sounds fancy and I only sort-of understand it. But in its analysis, the compiler only looks at types and discrete enumerations; it cannot, for example, tell whether every possible integer value has been considered. This construction, for instance, results in a compiler error:

match 100 {

y if y > 0 => "positive",

y if y == 0 => "zero",

y if y < 0 => "negative",

};

The pattern list looks pretty exhaustive to me, but Rust wouldn’t know it. I’m sure that someone who is versed in type theory will send me an email explaining how what I want is impossible unless P=NP, or something like that, but all I’m saying is, it’d be a nice feature to have. Are “Algebraic Data Values” a thing? They should be.
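One workaround, sketched here as a suggestion rather than the official answer, is to turn the comparison into an enum first, because the compiler can check an enum's variants for exhaustiveness. This uses `Ordering` from the standard library; the function name `sign` is mine.

```rust
use std::cmp::Ordering;

// `cmp` yields one of exactly three variants, so the compiler can
// verify that every case is covered without a catch-all arm.
fn sign(n: i32) -> &'static str {
    match n.cmp(&0) {
        Ordering::Greater => "positive",
        Ordering::Equal => "zero",
        Ordering::Less => "negative",
    }
}
```

Deleting any one arm turns this into a compile error, which is the "if and only if" behavior the guard version lacks.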

It’s a small touch, but Rust lets you nest function definitions, like so:

fn a_function() {

fn b_function() {

fn c_function() {

}

c_function(); // works

}

b_function(); // works

c_function(); // error: unresolved name `c_function`

}

Neat, huh? With other languages, I’m never quite sure where to put helper functions. I’m usually wary of factoring code into small, “beautiful” functions because I’m afraid they’ll end up under the couch cushions, or behind the radiator next to my car keys. With Rust, I can build up a kind of organic tree of function definitions, each scoped to the place where they’re actually going to be used, and promote them up the tree as they take on the Platonic form of Reusable Code.
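A slightly less abstract sketch of the same idea, with a helper that is scoped to the one function that needs it. Both function names are invented for the example.

```rust
fn hypotenuse(a: f64, b: f64) -> f64 {
    // Visible only inside `hypotenuse`; nothing outside can call it.
    fn square(x: f64) -> f64 {
        x * x
    }

    (square(a) + square(b)).sqrt()
}
```

If `square` ever earns a second caller, it can be promoted one level up without changing its body.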

Mutability rules: also great. Variables are immutable by default, and mutable with the mut keyword. It took me a little while to come to grips with the mutable reference operator &mut, but &mut and I now have a kind of respectful understanding, I think. Data, by the way, inherits the mutability of its enclosing structure. This is in contrast to C, where I feel like I have to write const in about 8 different places just to be double-extra sure, only to have someone else’s cast operator make a mockery of all my precautions.
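Here is a minimal sketch of those rules in action. The `Counter` type and the function names are hypothetical, and the commented-out line is the one the compiler would reject.

```rust
struct Counter {
    hits: u32,
}

// Takes a mutable reference; the caller must opt in with `&mut`.
fn bump(c: &mut Counter) {
    c.hits += 1;
}

fn demo() -> u32 {
    let frozen = Counter { hits: 0 };
    // frozen.hits = 1; // would not compile: `frozen` is not declared `mut`

    // The field is mutable here only because the binding is `mut`.
    let mut live = Counter { hits: frozen.hits };
    bump(&mut live);
    bump(&mut live);
    live.hits
}
```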

Functions in Rust are dispatched statically, if the actual data type is known at compile time, or dynamically, if only the interface is known. (Interfaces in Rust are called “traits”.) As an added bonus, there’s a feature called “type erasure” so you can force a dynamic dispatch to prevent compiling lots of pointlessly specialized functions. This is a good compromise between flexibility and performance, while remaining more or less transparent to the typical user.
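A rough sketch of the two dispatch styles, written with the `dyn` spelling that later Rust releases use for trait objects (an assumption relative to the 1.0-era syntax this post was written against). The `Shape` trait and its implementors are made up for the example.

```rust
trait Shape {
    fn area(&self) -> f64;
}

struct Square(f64);

struct Triangle {
    base: f64,
    height: f64,
}

impl Shape for Square {
    fn area(&self) -> f64 {
        self.0 * self.0
    }
}

impl Shape for Triangle {
    fn area(&self) -> f64 {
        0.5 * self.base * self.height
    }
}

// Static dispatch: a specialized copy is compiled per concrete type.
fn area_static<T: Shape>(shape: &T) -> f64 {
    shape.area()
}

// Dynamic dispatch: one compiled function, resolved at run time.
fn area_dynamic(shape: &dyn Shape) -> f64 {
    shape.area()
}

// A mixed collection is only possible through trait objects.
fn total_area(shapes: &[Box<dyn Shape>]) -> f64 {
    shapes.iter().map(|s| s.area()).sum()
}
```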

Is resource acquisition the same thing as initialization? I’m not sure, but C++ programmers will appreciate Rust’s capacity for RAII-style programming, the happy place where all your memory is freed and all your file descriptors are closed in the course of normal object deallocation. You don’t need to explicitly close or free most things in Rust, even in a deferred manner as in Go, because the Rust compiler figures it out in advance. The RAII pattern works well here because (like C++) Rust doesn’t have a garbage collector, so you won’t have open file descriptors floating around all week waiting for Garbage Pickup Day.
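To see the deallocation order for yourself, here is a small sketch built on the standard `Drop` trait. The `Handle` type stands in for a file descriptor, and the shared log exists only so the example can report what happened; both are hypothetical.

```rust
use std::cell::RefCell;
use std::rc::Rc;

// A pretend resource that records its own cleanup.
struct Handle {
    name: &'static str,
    log: Rc<RefCell<Vec<String>>>,
}

impl Drop for Handle {
    // Runs automatically when the value goes out of scope.
    fn drop(&mut self) {
        self.log.borrow_mut().push(format!("closed {}", self.name));
    }
}

fn open_and_close() -> Vec<String> {
    let log = Rc::new(RefCell::new(Vec::new()));
    {
        let _a = Handle { name: "a", log: Rc::clone(&log) };
        let _b = Handle { name: "b", log: Rc::clone(&log) };
        log.borrow_mut().push("working".to_string());
    } // `_b` is dropped first, then `_a` (reverse declaration order)

    let result = log.borrow().clone();
    result
}
```

No explicit close call appears anywhere; leaving the inner block is what triggers the cleanup.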

On the whole, even leaving aside the borrowed references / bathroom situation, which I promise I’ll talk about in a minute, there’s enough fun stuff going on in Rust to keep me interested as a performant, systems-y language. Many of its features and design decisions should be emulated in other languages. But there are a few areas where I wish Rust had taken cues from other languages, that is, languages in addition to the Official Influences mentioned on the Wikipedia page.

The not-so-fun stuff

When I’m trying to get a grip on a new language, I can usually count on assignment as a rudimentary building block from which greater epistemological edifices may be built.

But assignment in Rust is not a totally trivial topic. Thanks to Rust’s memory checking, this program produces an error:

enum MyValue { Digit(i32) }

fn main() {

let x = MyValue::Digit(10);

let y = x;

let z = x;

}

The reason is that let y = x moves the value out of x, so x can no longer be used on the next line; if it could, z might (later) mess with the value, leaving y in an invalid state (a consequence of Rust’s strict memory checking; more on that later). Fair enough. But then changing the top of the file to:

#[derive(Copy, Clone)]

enum MyValue { Digit(i32) }

makes the program compile. Now, instead of binding y to the value represented by x, the assignment operator copies the value of x to y. So z can have no possible effect on y, and the memory-safety gods are happy.

To me it seems a little strange that a pragma on the enum affects its assignment semantics. It makes it difficult to reason about a program without first reading all the definitions. The binding-copy duality, by the way, is another artifact of Rust’s mixed-paradigm heritage. Whereas functional languages tend to use bindings to map variable names to their values in the invisible spirit world of no-copy immutable data, imperative languages take names at face value, and assignment is always a copy (copying a pointer, perhaps, but still a copy). By attempting to straddle both worlds, Rust rather inelegantly overloads the assignment operator to mean either binding or copying. The meaning of = depends, rather Clintonesquely, on the context.
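The two behaviors can be put next to each other in one file. In this sketch the enum names `Moved` and `Copied` are mine, the extra `Debug` and `PartialEq` derives are there only so the values can be compared, and the commented-out line is the one that fails to compile.

```rust
// No `Copy`: assignment moves the value out of the source binding.
#[derive(Debug, PartialEq)]
enum Moved {
    Digit(i32),
}

// With `Copy`: assignment duplicates the value; the source stays usable.
#[derive(Debug, PartialEq, Clone, Copy)]
enum Copied {
    Digit(i32),
}

fn copy_twice() -> (Copied, Copied, Copied) {
    let x = Copied::Digit(10);
    let y = x; // copy
    let z = x; // copy again; `x` is still valid
    (x, y, z)
}

fn move_once() -> Moved {
    let x = Moved::Digit(10);
    let y = x; // move: `x` may not be used after this line
    // let z = x; // error[E0382]: use of moved value: `x`
    y
}
```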

A related oddity is that assignment, evaluated as an expression, returns (). I have no idea why that is the case, or how that could ever be more useful than returning the newly-assigned rvalue.

Another feature where a pragma seems out of place is inlining, although in this case I can’t really blame Rust for it. You can tell Rust you think a function should be inlined with an #[inline] pragma above the function (similar to C’s inline keyword). I am not an expert in compilation and linking, so maybe this is a dumb idea, but I’ve always thought it should be up to the caller to say which functions they’d like inlined, and the function should just mind its own business. (Did someone in the crowd just say “Amen”?)

Rust is being developed side-by-side with a browser rendering engine called Servo, written in you-know-what. Since the purpose of a browser rendering engine is to convert text files (i.e. HTML, CSS, and JavaScript) into something more attractive, it seemed like a safe bet that Rust would have excellent support for parsing things. Sadly, Rust is not a target for my favorite parser generator, and the lexers in Servo don’t look much better than C-style state machines, with lots of matching (switching) on character literals. There are newish libraries on GitHub implementing LALR and PEG parser generators for Rust, but on the whole, I am not left itching to write my next parser in Rust.

Concurrency in Rust is straightforward: you get a choice between OS threads, OS threads, and OS threads. When Rust was originally announced, the team had ambitions to pursue the multi-core MacGuffin with a green-threaded actor model, but they found out that it’s very hard to do green threads with native code compilation. (Go, for example, does native code compilation, but switches contexts only on I/O; Erlang can context-switch during a long-running computation, as well as on I/O, but only because it’s running in a virtual machine that can count the number of byte-code ops.)

Nonetheless, OS threads are very well understood (by… people), and so Rust has taken up a different challenge: eliminating crashes from otherwise traditional, “boring” multi-threaded programs. In the sense that Rust has abandoned its original vision in favor of pursuing more modest and achievable goals, Rust does feel rather grown-up. The team, I think, made the right decision focusing their efforts on memory safety; it lowers the learning curve for the target audience, and gives Rust a corner booth in the increasingly crowded marketplace for programming languages.
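For a sense of what the "boring" threaded style looks like, here is a minimal sketch using `std::thread::spawn`. Each chunk is moved into its own OS thread, so no two threads can touch the same data. The function name is hypothetical.

```rust
use std::thread;

// Sum each chunk on its own OS thread, then add up the partial sums.
fn parallel_sum(chunks: Vec<Vec<u64>>) -> u64 {
    let handles: Vec<thread::JoinHandle<u64>> = chunks
        .into_iter()
        // `move` transfers ownership of `chunk` into the new thread.
        .map(|chunk| thread::spawn(move || chunk.iter().sum::<u64>()))
        .collect();

    // `join` waits for a thread and hands back its return value.
    handles.into_iter().map(|h| h.join().unwrap()).sum()
}
```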

Of course, lest the Real Programmers in the audience begin to worry, it’s still possible to crash a Rust program. The unsafe keyword is a kind of fireman’s axe that you’re allowed to use if you promise you know what you’re doing; it lets you blit, bswap, and bit-twiddle away. unsafe is a good approach, balancing safety with flexibility, and in general limits application crashes to areas of code explicitly marked unsafe.
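The smallest fireman's axe I can think of is a raw pointer dereference, which the compiler only permits inside an `unsafe` block. The function name is made up, and this particular use happens to be harmless because the pointer is known to be valid.

```rust
fn read_through_raw_pointer(value: i32) -> i32 {
    // Creating a raw pointer is safe; it is just an address.
    let p: *const i32 = &value;

    // Dereferencing it is not checked by the compiler, hence `unsafe`.
    unsafe { *p }
}
```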

But as with the match keyword, which as in the example above can possess impossible beliefs about integers, the Rust compiler is at times more cautious than intelligent. A common pattern in parallel computing, for example, is to concurrently perform an operation over non-overlapping parts of a shared data array. As a simple (single-threaded) example, the following code will not compile in Rust:

fn main() {

let mut v = vec![1, 2]; // a mutable vector

let a = &mut v[0]; // mutable reference to 1st element

let b = &mut v[1]; // mutable reference to 2nd element

}

It’s perfectly valid code, and accessing non-overlapping slices of an array is obviously safe. But the above program will cause the Rust compiler to hem and haw about competing references to v.

However, Rust’s parallel-computation cabal has a kind of secret handshake called split_at_mut. Although the Rust compiler is unable to reason about whether specific index values overlap, it is willing to believe that split_at_mut produces non-overlapping slices, so this equivalent program compiles just fine:

fn main() {

let mut v = vec![1, 2];

let (a_slice, b_slice) = v.split_at_mut(1);

let (a, b) = (&mut a_slice[0], &mut b_slice[0]);

}

Most parallel computations end up using split_at_mut. In the interest of code clarity, it would be nice if the Rust compiler got rid of the split_at_mut secret password and could reason sanely about slice literals and array indexes. For the record, the Nim language manages to get this right, and the Rust folks might look to it for inspiration.
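Here is a sketch of the handshake used for actual parallel work. It relies on `std::thread::scope`, which was added to the standard library well after this post was written, so treat it as an illustration for current toolchains rather than something available in Rust 1.0. The function name is hypothetical.

```rust
use std::thread;

// Double every element, processing the two halves on separate threads.
fn double_in_parallel(v: &mut [i32]) {
    let mid = v.len() / 2;
    // The compiler trusts that these two slices do not overlap.
    let (left, right) = v.split_at_mut(mid);

    // Scoped threads may borrow local data; all are joined on exit.
    thread::scope(|s| {
        s.spawn(|| {
            for x in left.iter_mut() {
                *x *= 2;
            }
        });
        s.spawn(|| {
            for x in right.iter_mut() {
                *x *= 2;
            }
        });
    });
}
```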

(It is also worth noting that processing non-overlapping slices in parallel is destined to come into mortal conflict with The Iterator, which is by its nature sequential. In that sense parallel processing is another victim of Rust’s multi-paradigm nature; the language would be easier to parallelize if it were more aggressively imperative, or more stringently functional, but by occupying the middle ground between The Array and The Function, it loses out on well-known techniques from both camps.)

Because of Rust’s strict memory-checking requirements, error messages play a rather crucial role in learning to write Rust code that is safe and error-free (the same thing, outside an unsafe block). Rust’s error messages are quite specific, perhaps to a fault; they tend to have a lot of jargon, which I suppose is necessary because Rust has its own private universe of memory-related concepts. But in learning Rust, I often felt lost, alone, and confused amid all the reprinted code and messages about block suffixes.

The error messages can also be, shall we say, overly effusive. For example, the official way to print a line is the println! macro; if you have some kind of error in the arguments, Rust treats you to a full exposition of how and where the println! macro was expanded, which is knowledge that I never particularly aspired to possess. Although the programming profession has, over the years, cultivated a reputation for providing little to no information about where things went wrong, it is in this case possible to have too much of a good thing. Perhaps when they’re done writing a browser engine, the next Big Rust Project should be an IDE that reads Rust error messages and just draws a giant crimson arrow through my code that says “Move this line here, you dummy.”

C and back again

As my first Rust project, I thought it would be very funny to fork the Servo web rendering engine, and try to implement a terminal-based web browser à la Lynx or w3m. I figured I could get a rough proof-of-concept in a weekend.

Several naps later, I realized that the idea of working on a web browser led inevitably to thoughts about the meaning of life, so I decided instead to take on, well, a more modest and achievable project. Thus was born, in its place, Excalibur: Destroyer of Spreadsheets.

(Excalibur is the second installment in Terminally Insane Programming, my neo-Gothic terminal revival movement. The first project was Hecate: The Hex Editor From Hell, now available in source code form. One day I hope these projects will form either a trilogy, a trilogy of trilogies, or ideally, a trilogy of trilogies of trilogies, at which point the earth will be engulfed in calamities and the true nature of the universe will be revealed to mankind as a one-line Mathematica program.)

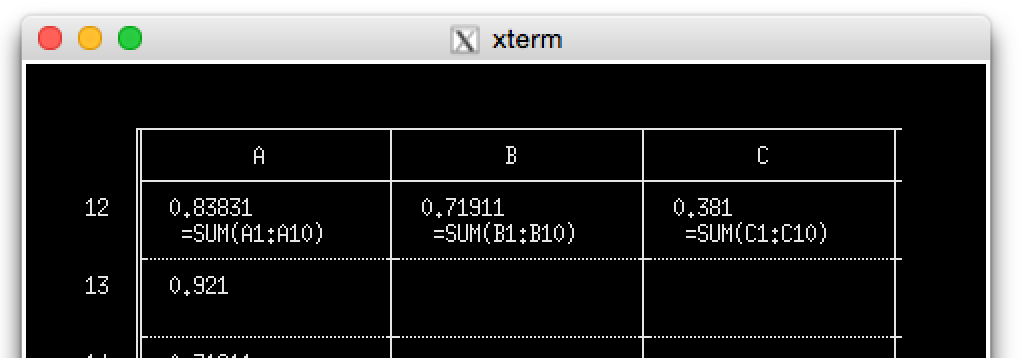

Excalibur’s raison d’être is this: I do a lot of work with spreadsheets, and often want to see if all the formulas in a spreadsheet look right. To see a formula in any spreadsheet program (and they all use the VisiCalc interface from 1979), you have to put your cursor over the cell and look at the top of the screen, which means you can only inspect one formula at a time. I’d like a program where the formulas appear below each value, something like this:

(ASCII art done in Vim, my new favorite mockup tool.)

I figured this would be a good test of interfacing with C libraries — libxls for reading spreadsheets, termbox for making a terminal program — so I was off to the races. And I don’t mean data races.

The first task was to wrap up libxls, that is, expose the library functions for reading Excel spreadsheets to Rust somehow. Rust has a decent FFI mechanism, so I didn’t have to actually write any glue code in C. However, at times I felt like I was jumping through an unnecessary number of hoops just to call a C function and convert its return value to something Rusty.

Definitions for standard C types (int, long, void *) are nested deep in packages, and can be hard to find. For example, the C int and char types are located, rather improbably, at libc::types::os::arch::c95::c_int and libc::types::os::arch::c95::c_char. The symbols are also accessible from the top-level libc namespace, so you don’t actually have to type out a complicated module hierarchy every time, but the online documentation only lists the specific types at their five-layers-deep locations. If you want a full list, bring a hard hat and coveralls.

Rust and C have somewhat different concepts of strings, and so converting them is another minor chore. Here’s a function that calls xls_getVersion(), which returns a C string, and converts it to a Rust String:

use std::ffi::CStr;

use std::str;

pub fn version() -> String {

let c_string = unsafe { CStr::from_ptr(xls_getVersion()) };

str::from_utf8(c_string.to_bytes()).unwrap().to_string()

}

I found a variant of that code with a big green check next to it on StackOverflow. I understand what it’s doing, but five function calls (from_ptr, from_utf8, to_bytes, unwrap, to_string) just to convert a C string to a Rust string seems like an excessive amount of ceremony, at least for a language that keeps using the word “systems” on its website.
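A somewhat shorter route, sketched under the assumption that replacing invalid UTF-8 with a placeholder character is acceptable, is `CStr::to_string_lossy`. So that the example runs without libxls, `fake_c_version` stands in for `xls_getVersion()`, and the version text it returns is made up.

```rust
use std::ffi::CStr;
use std::os::raw::c_char;

// Stand-in for a C function that returns a NUL-terminated string.
// The version text is invented for this example.
fn fake_c_version() -> *const c_char {
    b"1.0.0\0".as_ptr() as *const c_char
}

fn version() -> String {
    // SAFETY: the pointer refers to a static, NUL-terminated byte string.
    let c_string = unsafe { CStr::from_ptr(fake_c_version()) };

    // Borrow as UTF-8 where possible, then copy into an owned String.
    c_string.to_string_lossy().into_owned()
}
```

That brings the ceremony down to three calls, at the cost of silently papering over malformed input.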

Once you get the types squared away, actually calling C functions is straightforward, albeit somewhat tedious. Rust won’t read C header files, so you have to manually declare each function you want to call by wrapping it in an extern block, like this:

extern {

fn xls_getVersion() -> *const libc::c_char;

}

That part is easy, but things get a little trickier if a library’s C API expects you to access struct fields directly rather than use accessor functions. In that case you have to mirror all of the structs manually, as with this C struct in libxls:

struct st_sheet_data {

DWORD filepos;

BYTE visibility;

BYTE type;

BYTE* name;

};

And its doppelgänger in Rust:

#[repr(C)]

struct NativeWorksheetData {

filepos : u32,

visibility : u8,

ws_type : u8,

name : *const i8,

}

The #[repr(C)] bit at the top tells Rust that you want a Rust struct that is bit-compatible with the same struct declared in C. It’s a great language feature, and there’s a variant that lets you get a “packed” structure (a common pattern in blit-heavy C libraries). In practice, though, the feature has its limits. For example, the libxls API has an array-of-structs in a few places (array of worksheets, array of rows, array of cells, etc.); because Rust doesn’t believe in pointer arithmetic, I found myself manually writing the pointer arithmetic logic, which was totally unsafe, and non-trivial on account of how C aligns structures and fields in memory. The resulting code feels like a crash waiting to happen.
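For comparison, here is how walking an array of C-layout structs can look on a current toolchain, where raw pointers have an `add` method that steps in units of the struct's full size, padding included. This is a sketch only: `NativeCell` and its fields are hypothetical and do not mirror any real libxls struct, and the array is built in Rust rather than handed over by C.

```rust
#[repr(C)]
struct NativeCell {
    row: u16,
    col: u16,
    value: f64,
}

// Sum the `value` fields twice: by hand, and through a borrowed slice.
fn sum_values_two_ways() -> (f64, f64) {
    let cells = [
        NativeCell { row: 0, col: 0, value: 1.5 },
        NativeCell { row: 0, col: 1, value: 2.5 },
        NativeCell { row: 1, col: 0, value: 4.0 },
    ];
    // Pretend this pointer and count came back from a C library.
    let first: *const NativeCell = cells.as_ptr();
    let count = cells.len();

    // Manual pointer arithmetic: `add(i)` moves forward by i structs.
    let mut by_hand = 0.0;
    for i in 0..count {
        by_hand += unsafe { (*first.add(i)).value };
    }

    // Alternative: view the same memory as an ordinary slice.
    let view: &[NativeCell] = unsafe { std::slice::from_raw_parts(first, count) };
    let by_slice: f64 = view.iter().map(|c| c.value).sum();

    (by_hand, by_slice)
}
```

Either way the compiler takes the pointer and the count on faith, so a wrong count from the C side is still a crash waiting to happen.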

With all the pointer-hopping I was doing, I was surprised to find out how the unsafe keyword actually works. My initial belief was that a function that does something unsafe must, itself, be unsafe, but in reality it’s more of an honor code system. A function that does something unsafe (e.g. pointer arithmetic or calling C libraries) can be exported as safe. This may come as a surprise if you are using someone else’s Rust libraries, as it’s possible they’re doing something unsafe under the hood and just not telling you about it.

Of course, the lax rules about un-safety are rather convenient if you want to do something unsafe in a trait (interface) implementation. If the unsafe keyword propagated up the call chain, then you’d be unable to implement RAII or iterators with any unsafe logic, because in Rust, safe and unsafe functions have incompatible method signatures, and RAII and iterator methods are marked safe. So I’m grateful that I can construct a Sheet Iterator, a Row Iterator, and a Cell Iterator in my spreadsheet library; they purport to be safe, and are eligible for all the abstract wonders of Iteration, but in reality they call out to C code that could incinerate the user’s hard drive. In that sense, the unsafe keyword is merely advisory signage, like those warnings near prisons about picking up hitchhikers.
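A stripped-down sketch of the pattern: an iterator whose `next` method has an ordinary safe signature but walks a raw pointer internally. The type `CellIter` is hypothetical and iterates over plain integers instead of spreadsheet cells; the `PhantomData` field ties the pointer to the slice it came from so the iterator cannot outlive the data.

```rust
use std::marker::PhantomData;

struct CellIter<'a> {
    cursor: *const i32,
    remaining: usize,
    _owner: PhantomData<&'a [i32]>,
}

impl<'a> CellIter<'a> {
    fn new(cells: &'a [i32]) -> Self {
        CellIter {
            cursor: cells.as_ptr(),
            remaining: cells.len(),
            _owner: PhantomData,
        }
    }
}

impl<'a> Iterator for CellIter<'a> {
    type Item = i32;

    // Safe signature, unsafe body: callers never see the difference.
    fn next(&mut self) -> Option<i32> {
        if self.remaining == 0 {
            return None;
        }
        // SAFETY: `cursor` points at one of the `remaining` elements
        // of the slice passed to `new`, which is still borrowed.
        let value = unsafe { *self.cursor };
        self.cursor = unsafe { self.cursor.add(1) };
        self.remaining -= 1;
        Some(value)
    }
}
```

Because it implements `Iterator`, it gets `map`, `sum`, and the rest of the adaptors for free.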

Actually building a Rust program that links to C involved one final, minor annoyance. The Rust documentation advises you to build things with Rust’s do-it-all package manager, Cargo, but until a few days ago, Cargo didn’t understand linker flags, so I couldn’t tell it where to find libxls on my hard drive. The issue has been sort-of addressed with the new cargo rustc command. My library is building, for now, and I’ll refrain from any invective about it.

Once my libxls wrapper was finished, built, and running, I was pleased with the result. Rust lets me call C functions and access C structs, but then expose the results at a higher level of abstraction with iterators and automatic handle-closing via RAII. I particularly liked using Rust’s type system to express the kinds of values represented by a spreadsheet cell (StringValue, DoubleValue, FormulaValue, and so on); as a consequence, Rust client code for libxls is quite a bit cleaner than the equivalent C code, which requires a lot of branching to extract different kinds of data. For the benefit of posterity, I’ve put the rust-xls library code on GitHub, although it probably won’t work for you until I get a tiny libxls patch accepted upstream.
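To illustrate the kind of type I mean, here is a guess at the shape rather than the actual rust-xls definition. The variant names come from the paragraph above; the payload types and the `describe` function are assumptions.

```rust
// One variant per kind of cell content (payload types are assumed).
enum CellValue {
    StringValue(String),
    DoubleValue(f64),
    FormulaValue(String),
}

// `match` replaces the chain of type-tag branches needed in C.
fn describe(cell: &CellValue) -> String {
    match cell {
        CellValue::StringValue(s) => format!("text: {}", s),
        CellValue::DoubleValue(d) => format!("number: {}", d),
        CellValue::FormulaValue(f) => format!("formula: ={}", f),
    }
}
```

If a new kind of cell is added later, every `match` that forgets to handle it stops compiling.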

By way of confession, despite my best intentions, I did not actually get around to implementing Excalibur: Destroyer of Spreadsheets. An exposition of that experience will have to wait for another installment. Part of the reason is that I don’t actually need Excalibur all that badly, but the other part is that it took me longer than expected to feel comfortable writing Rust code. Although Rust looks like just-another-curly-brace-language, it can be surprisingly difficult to write correct (compilable) code. At every turn, you must satisfy, and make periodic sacrifices to, the gods of memory ownership.

The bathroom at the end of the universe

Thus far I have deliberately avoided talking too much about Rust’s memory-ownership model. The reason is that learning it is a pain, and it can feel like a set of mysterious rules as to when variables can be assigned or used as arguments to function calls. I wondered for a while whether Rust’s hype and insurgent popularity was purely due to how difficult it was to write — a sort of fixed-gear bicycle for the computing world — and whether Rust programs achieved memory safety simply by being bathed in the tears of programmers.

But after working for a while within The Memory Ownership Rules, I’ve developed a kind of grudging respect for them. Rather than inundate you with code samples here, I’ll try to explain how memory ownership works at a high level, so when you sit down with a proper tutorial, you (hopefully) won’t think that Rust’s memory requirements are totally arbitrary and dumb, like I did at first.

To understand where The Rules come from, it helps to understand how other languages manage memory.

In modern languages, memory management usually happens with garbage collection or reference counting. Garbage collection is convenient because the programmer can usually bang out code without thinking too hard about memory allocation. Reference-counting requires a little more work on the part of the programmer, but has more predictable performance without those nasty CPU spikes while the garbage collector cleans house.

Rust initially had a special kind of garbage-collected variable, denoted with @, but the designers got rid of it in the interest of simplifying the language. Rust does have reference-counted data types in the form of Rc and Arc, but most memory is actually not refcounted — at least not at run-time.
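For a feel of what run-time reference counting looks like with Rc, here is a minimal sketch. It assumes a current stable toolchain (Rc::strong_count was not stable when this article was written), and the function name refcount_trace is invented for illustration.

```rust
use std::rc::Rc;

/// Hypothetical helper: reports the strong count after creating an Rc,
/// after cloning the handle, and after dropping the clone.
fn refcount_trace() -> (usize, usize, usize) {
    let first = Rc::new(String::from("shared"));
    let before = Rc::strong_count(&first);

    // Rc::clone copies the pointer and bumps the count; the String itself is not copied.
    let second = Rc::clone(&first);
    let during = Rc::strong_count(&first);

    // Dropping one handle decrements the count; the String is freed only at zero.
    drop(second);
    let after = Rc::strong_count(&first);

    (before, during, after)
}

fn main() {
    let (before, during, after) = refcount_trace();
    println!("{} {} {}", before, during, after);
}
```

Arc offers the same interface with atomic counter updates, which is what makes it usable across threads.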

Rust’s memory is essentially reference-counted at compile-time, rather than run-time, with a constraint that the refcount cannot exceed 1. Blocks of code “own” the references (Rust calls them bindings), and in the normal case, objects are deallocated as they go out of scope. To change ownership of the reference (binding), you have to either “move” the reference to another variable or block of code, or make a copy of the underlying data. Attempting to access a variable that has been moved results in a compiler error.
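A small sketch of the move-or-copy rule described above, assuming a recent stable compiler. The names consume and demo are made up for the example, and the line that would trigger the compiler error is left commented out so the program builds.

```rust
/// Takes ownership of the vector; the vector is freed when this function returns.
fn consume(values: Vec<i32>) -> usize {
    values.len()
}

/// Hypothetical walk-through of copying versus moving a value.
fn demo() -> (usize, usize) {
    let original = vec![1, 2, 3];

    // Explicit copy of the underlying data: `original` stays usable afterwards.
    let copy = original.clone();

    // Move: ownership passes to `moved`, and `original` is no longer valid.
    let moved = original;
    // println!("{:?}", original); // would not compile: use of moved value

    // Passing by value moves again, this time into the function.
    let moved_len = consume(moved);

    (moved_len, copy.len())
}

fn main() {
    let (moved_len, copy_len) = demo();
    println!("{} {}", moved_len, copy_len);
}
```

Simple types such as integers implement Copy, so assigning them duplicates the value instead of moving it, which is part of why the same = can mean different things for different types.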

In normal (run-time) reference-counted languages, the language needs some kind of support for weak references to avoid reference cycles and therefore memory leaks. Weak references do not contribute to the reference count, so they must be carefully managed in order to prevent accessing a deallocated object. Typically, an “owning” object will have a strong reference to an “owned” object, and the owned object will have a weak back-reference to the owner. (It’s up to the programmer to ensure that the owning object scrubs its name off of all its stuff before it gets deallocated.)
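Rust's own run-time refcounting follows the same owner/owned pattern, using std::rc::Weak for the back-reference. The sketch below is illustrative only: the Owner and Gadget types and the owner_name helper are invented, and it assumes a current stable toolchain. One difference from the manual scrubbing described above is that a Weak pointer has to be upgraded before use, and the upgrade fails once the owner is gone.

```rust
use std::cell::RefCell;
use std::rc::{Rc, Weak};

// Hypothetical types: the owner holds strong references to its gadgets,
// and each gadget holds only a weak back-reference to its owner.
struct Owner {
    name: String,
    gadgets: RefCell<Vec<Rc<Gadget>>>,
}

struct Gadget {
    owner: Weak<Owner>,
}

/// Looks up the owner's name, or None if the owner has been deallocated.
fn owner_name(gadget: &Gadget) -> Option<String> {
    gadget.owner.upgrade().map(|owner| owner.name.clone())
}

fn demo() -> (Option<String>, Option<String>) {
    let owner = Rc::new(Owner {
        name: String::from("Gizmo Inc."),
        gadgets: RefCell::new(Vec::new()),
    });
    let gadget = Rc::new(Gadget {
        owner: Rc::downgrade(&owner),
    });
    owner.gadgets.borrow_mut().push(Rc::clone(&gadget));

    let while_alive = owner_name(&gadget);

    // The weak back-reference does not keep the owner alive, so this frees it.
    drop(owner);
    let after_drop = owner_name(&gadget);

    (while_alive, after_drop)
}

fn main() {
    let (while_alive, after_drop) = demo();
    println!("{:?} {:?}", while_alive, after_drop);
}
```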

Weak references are analogous to Rust’s concept of “borrowing”. The compiler ensures that a weak reference (borrower) never outlives a strong reference (owner), so your code will never be able to access a deallocated object. Rust goes a bit further with “lifetimes”, which let you express the longevity of a reference in terms of other references, usually in the context of a function call or data structure. It sounds rather abstract, but in practice it just means something like “this function returns a value that lives as long as the thing you passed it”, which ought to be a cause for joy for anyone who has worked on a large, pointer-happy C++ or Objective-C program. If you’re feeling lazy, there’s a special lifetime called 'static, which means the thing lives as long as the program is running.
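To make the lifetime idea concrete, here is a minimal sketch. The functions first_word and language_name are invented for illustration, and the lifetime is written out explicitly even though the compiler could infer it in a case this simple.

```rust
/// The returned slice borrows from `text`, so it cannot outlive `text`.
fn first_word<'a>(text: &'a str) -> &'a str {
    text.split_whitespace().next().unwrap_or("")
}

/// A 'static reference stays valid for the whole run of the program;
/// string literals are the most common example.
fn language_name() -> &'static str {
    "Rust"
}

fn main() {
    let sentence = String::from("borrowed words outlive nothing");
    let word = first_word(&sentence);
    println!("{} {}", word, language_name());
    // Dropping `sentence` here and then using `word` again would be rejected
    // at compile time, because `word` still borrows from `sentence`.
}
```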

By doing all the reference accounting at compile-time, Rust has “zero-cost abstractions”, including structs that are bit-compatible with C (with #[repr(C)], as above), and extremely predictable performance. By having a maximum refcount of 1 (which embodies Rust’s idea of ownership), Rust heads off all kinds of problems in concurrency, where (otherwise) multiple threads with references to the same object might cause data races, and produce the kinds of subtle bugs that plague multi-threaded C++ programs.
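As a rough illustration of how this plays out with threads, the sketch below shares one counter between several workers. It assumes a current stable toolchain and the standard library's Arc and Mutex; the function parallel_count is invented for the example. Each thread gets its own Arc handle moved into its closure, and the compiler will not let the counter be mutated without going through the lock.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

/// Hypothetical example: spawns `workers` threads that each add 1 to a
/// shared counter `increments` times, then returns the final total.
fn parallel_count(workers: usize, increments: usize) -> usize {
    let counter = Arc::new(Mutex::new(0usize));
    let mut handles = Vec::new();

    for _ in 0..workers {
        // Each thread owns its own handle; `move` transfers it into the closure.
        let counter = Arc::clone(&counter);
        handles.push(thread::spawn(move || {
            for _ in 0..increments {
                // The data is reachable only through the lock guard.
                *counter.lock().unwrap() += 1;
            }
        }));
    }

    for handle in handles {
        handle.join().unwrap();
    }

    // Bind to a local so the lock guard is released before `counter` goes out of scope.
    let total = *counter.lock().unwrap();
    total
}

fn main() {
    println!("{}", parallel_count(4, 1000));
}
```

Trying to share a plain Rc or an unguarded mutable reference with the spawned threads instead is rejected at compile time, which is the practical payoff of the ownership rule.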

I’m not sure if the ownership rule is actually helpful in single-threaded contexts, but it at least makes sense in light of Rust’s green-threaded heritage. Even though the green-threaded architecture has been abandoned, the Rust designers have been thinking carefully about memory safety in concurrent environments since the language’s inception, and this thinking is apparent in the memory model.

So there you have it, at least as I understand it — the Rust memory model is compile-time reference-counting, with extra rules in place to prevent data races, and added facilities (Rc, Arc) for doing old-fashioned reference-counting at run-time. The memory model imposes constraints on the code you write, and it takes time to come to terms with all the rules, but the resulting code has a kind of structural clarity that is lost in the equivalent C++ programs, where invalid pointers could lurk anywhere. I haven’t worked with the language enough to give a personal bug-count testimonial, but I am inclined to believe Mozilla’s claim that 50% of the security vulnerabilities found in Firefox would have been mitigated by Rust. The language feels like a metal that is harder to work with, but once it is beaten into shape, lasts that much longer.

More is less

And yet I keep thinking back to the Daley Center Building, that brown Corten structure sitting behind the Picasso sculpture in the pictures that I showed you at the beginning of the article.

The Richard J. Daley Center Building, formerly the Chicago Civic Center.

The Picasso sculpture is visible at the bottom of the photograph.

Unveiled in 1965, the building was designed by a student of Mies van der Rohe, the famous “less is more” messiah of architectural modernism. Like the best works of Mies, the Daley Center has a kind of spare, Spartan, almost severe beauty to it. Every line serves a purpose, and nothing feels confused or out of place. The building adheres to the “form follows function” mantra of modernism: the underlying structure is evident from the outward appearance, and nothing is shrouded or hidden away. You know it is Corten because it is brown; it was once a shameless shade of bright orange, as oxidation is simply a reality of the material.

I have a nagging sadness about the Rust language because in some ways, it comes very close to expressing the formal purity of that building. It makes the reality of the computer evident in the structure of the language (native types, structs, pointers, arrays), and tames that reality so that it does not present the traditional dangers of systems programming (via Rust’s compile-time checks). The small amount of Rust code that I wrote was remarkably clear in its purpose and intent, and was satisfying in its safety guarantees. (That is, apart from my Evel Knievel-style pointer jumping discussed earlier.)

But then reading more example code, I can’t help but think back to Rust’s fundamental identity problem. I’m really not sure if Rust wants to be a functional language or not, and I have to wonder if the emphasis on iterators, builder patterns, and (semi-)functional programming is a kind of pragmatic response to the constraints imposed by Rust’s memory-ownership rules. My fear is that newcomers to the language will try to get around the demands of the borrow-checker simply by writing things in a pseudo-functional, lots-of-chained-method-calls style, for which Rust is not all that well-designed, and which spurns, rather than celebrates, the realities of The Machine.
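For readers who have not seen the two styles side by side, here is the same small computation written both ways. This is only an illustrative sketch with invented function names, assuming a current stable toolchain; it makes no claim about which version compiles to better code.

```rust
/// Chained-method style: filter, map, and sum over an iterator.
fn sum_even_squares_chained(values: &[i64]) -> i64 {
    values.iter().filter(|v| **v % 2 == 0).map(|v| v * v).sum()
}

/// Imperative style: an explicit index loop over the array.
fn sum_even_squares_loop(values: &[i64]) -> i64 {
    let mut total = 0;
    for i in 0..values.len() {
        if values[i] % 2 == 0 {
            total += values[i] * values[i];
        }
    }
    total
}

fn main() {
    let data = [1, 2, 3, 4];
    println!(
        "{} {}",
        sum_even_squares_chained(&data),
        sum_even_squares_loop(&data)
    );
}
```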

And that, I think, is the heart of the problem with Rust, in spite of its many virtues. If a large piece of software is the computing equivalent of a skyscraper, then you might think of a systems language as a style (school) of architecture. The major breakthrough of Mies’s International style was to show what heights could be achieved simply by respecting, and celebrating, the physicality of glass and steel. Some people loved the aesthetic, others despised all the black and brown boxes jutting into their skylines; but regardless of opinion, the style had a kind of sure-footedness and sense of identity that is hard not to admire. That sure-footedness is lacking in Rust. It feels like it has two systems languages inside it — one functional, one imperative — and they would achieve fuller expression if only they didn’t have to bend to each other. As long as Rust is trying to accommodate both The Array and The Function, it will never fully exploit the capabilities of The Machine, and its potential heights will remain undiscovered. It won’t have the Sartrean self-confidence of a more narrowly defined language, and, in practical terms, I’ll never quite be sure what the = operator is doing.

In that sense, perhaps we should try to forget my faux etymology about Corrosive Tensile steel and minimalist skyscrapers, and look back to that IRC chat log for the more accurate origin of Rust’s name. A rust is a fungus with up to five distinct morphologies, or forms. (Humans have two: a sperm or egg, then that angsty ball of flesh.) I’ll let Rust’s creator explain the rest:

<graydon> fungi are amazingly robust

<graydon> to start, they are distributed organisms. not single cellular, but also no single point of failure.

<graydon> then depending on the fungi, they have more than just the usual 2 lifecycle phases of critters like us (somatic and gamete)

<jonanin> ohhh

<jonanin> those kind of phases

<graydon> they might have 3, 4, or 5 lifecycle stages. several of which might cross back on one another (meet and reproduce, restart the lineage) and/or self-reproduce or reinfect

<jonanin> but i mean

<jonanin> you have haploid gametes and diploid somatic cells right? what else could there be?

<graydon> and in rusts, some of them actually alternate between multiple different hosts. so a crop failure or host death of one sort doesn’t kill off the line.

<graydon> they can double up!

<graydon> http://en.wikipedia.org/wiki/Dikaryon

<graydon> it’s madness. basically like someone was looking at sexual reproduction and said “nah, way too failure-prone, let’s see how many other variations we can do in parallel”

<jonanin> I can’t really understand that lol.
